Note

Click here to download the full example code

When to parallelize?#

That is the question. Parallize computation takes some time to set up, it is not the right solution in every case. The following example studies the parallelism introduced into the runtime of TreeEnsembleRegressor to see when it is best to do it.

from pprint import pprint

import numpy

from pandas import DataFrame

import matplotlib.pyplot as plt

from tqdm import tqdm

from sklearn import config_context

from sklearn.datasets import make_regression

from sklearn.ensemble import HistGradientBoostingRegressor

from sklearn.model_selection import train_test_split

from cpyquickhelper.numbers import measure_time

from pyquickhelper.pycode.profiling import profile

from mlprodict.onnx_conv import to_onnx, register_rewritten_operators

from mlprodict.onnxrt import OnnxInference

from mlprodict.tools.model_info import analyze_model

Available optimisations on this machine.

from mlprodict.testing.experimental_c_impl.experimental_c import code_optimisation

print(code_optimisation())

AVX-omp=8

Training and converting a model#

data = make_regression(50000, 20)

X, y = data

X_train, X_test, y_train, y_test = train_test_split(X, y)

hgb = HistGradientBoostingRegressor(max_iter=100, max_depth=6)

hgb.fit(X_train, y_train)

print(hgb)

HistGradientBoostingRegressor(max_depth=6)

Let’s get more statistics about the model itself.

pprint(analyze_model(hgb))

{'_predictors.max|tree_.max_depth': 6,

'_predictors.size': 100,

'_predictors.sum|tree_.leave_count': 3100,

'_predictors.sum|tree_.node_count': 6100,

'train_score_.shape': 101,

'validation_score_.shape': 101}

And let’s convert it.

register_rewritten_operators()

onx = to_onnx(hgb, X_train[:1].astype(numpy.float32))

oinf = OnnxInference(onx, runtime='python_compiled')

print(oinf)

OnnxInference(...)

def compiled_run(dict_inputs, yield_ops=None, context=None, attributes=None):

if yield_ops is not None:

raise NotImplementedError('yields_ops should be None.')

# inputs

X = dict_inputs['X']

(variable, ) = n0_treeensembleregressor_3(X)

return {

'variable': variable,

}

The runtime of the forest is in the following object.

print(oinf.sequence_[0].ops_)

print(oinf.sequence_[0].ops_.rt_)

TreeEnsembleRegressor_3(

op_type=TreeEnsembleRegressor

aggregate_function=b'SUM',

base_values=[0.62794507],

base_values_as_tensor=[],

domain=ai.onnx.ml,

inplaces={},

ir_version=8,

n_targets=1,

nodes_falsenodeids=[34 17 10 ... 60 0 0],

nodes_featureids=[12 18 13 ... 4 0 0],

nodes_hitrates=[1. 1. 1. ... 1. 1. 1.],

nodes_hitrates_as_tensor=[],

nodes_missing_value_tracks_true=[1 1 1 ... 1 0 0],

nodes_modes=[b'BRANCH_LEQ' b'BRANCH_LEQ' b'BRANCH_LEQ' ... b'BRANCH_LEQ' b'LEAF'

b'LEAF'],

nodes_nodeids=[ 0 1 2 ... 58 59 60],

nodes_treeids=[ 0 0 0 ... 99 99 99],

nodes_truenodeids=[ 1 2 3 ... 59 0 0],

nodes_values=[0.21894096 0.06143481 0.02431714 ... 0.15920539 0. 0. ],

nodes_values_as_tensor=[],

parallel=(60, 128, 20),

post_transform=b'NONE',

runtime=None,

target_ids=[0 0 0 ... 0 0 0],

target_nodeids=[ 4 6 8 ... 57 59 60],

target_opset=3,

target_treeids=[ 0 0 0 ... 99 99 99],

target_weights=[-25.663 -19.885317 -16.915827 ... 1.1101708 1.9407381

3.5393353],

target_weights_as_tensor=[],

)

<mlprodict.onnxrt.ops_cpu.op_tree_ensemble_regressor_p_.RuntimeTreeEnsembleRegressorPFloat object at 0x7f3e2869e0f0>

And the threshold used to start parallelizing based on the number of observations.

print(oinf.sequence_[0].ops_.rt_.omp_N_)

20

Profiling#

This step involves pyinstrument to measure where the time is spent. Both scikit-learn and mlprodict runtime are called so that the prediction times can be compared.

X32 = X_test.astype(numpy.float32)

def runlocal():

with config_context(assume_finite=True):

for i in range(0, 100):

oinf.run({'X': X32[:1000]})

hgb.predict(X_test[:1000])

print("profiling...")

txt = profile(runlocal, pyinst_format='text')

print(txt[1])

profiling...

_ ._ __/__ _ _ _ _ _/_ Recorded: 04:41:12 AM Samples: 6069

/_//_/// /_\ / //_// / //_'/ // Duration: 84.956 CPU time: 584.970

/ _/ v4.4.0

Program: somewhere/workspace/mlprodict/mlprodict_UT_39_std/_doc/examples/plot_parallelism.py

84.937 profile ../pycode/profiling.py:455

`- 84.937 runlocal plot_parallelism.py:91

[42 frames hidden] plot_parallelism, sklearn, <built-in>...

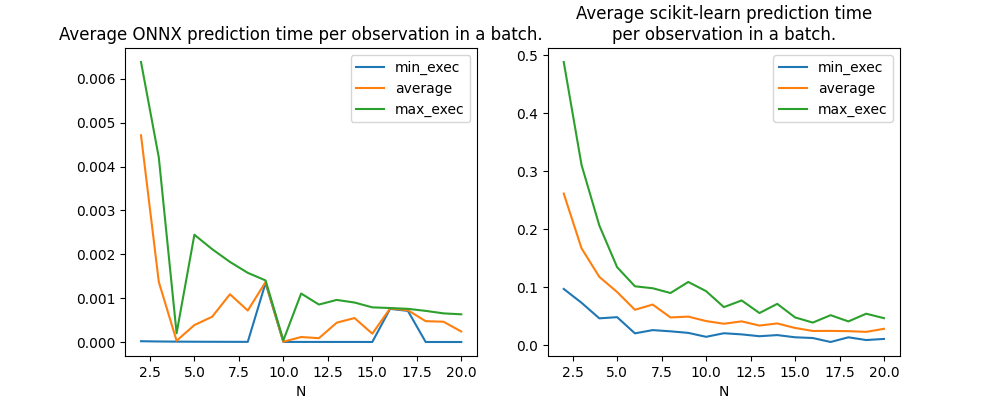

Now let’s measure the performance the average computation time per observations for 2 to 100 observations. The runtime implemented in mlprodict parallizes the computation after a given number of observations.

obs = []

for N in tqdm(list(range(2, 21))):

m = measure_time("oinf.run({'X': x})",

{'oinf': oinf, 'x': X32[:N]},

div_by_number=True,

number=20)

m['N'] = N

m['RT'] = 'ONNX'

obs.append(m)

with config_context(assume_finite=True):

m = measure_time("hgb.predict(x)",

{'hgb': hgb, 'x': X32[:N]},

div_by_number=True,

number=15)

m['N'] = N

m['RT'] = 'SKL'

obs.append(m)

df = DataFrame(obs)

num = ['min_exec', 'average', 'max_exec']

for c in num:

df[c] /= df['N']

df.head()

0%| | 0/19 [00:00<?, ?it/s]

5%|5 | 1/19 [01:20<24:05, 80.30s/it]

11%|# | 2/19 [02:36<22:01, 77.73s/it]

16%|#5 | 3/19 [03:46<19:51, 74.46s/it]

21%|##1 | 4/19 [04:55<18:04, 72.29s/it]

26%|##6 | 5/19 [05:51<15:26, 66.21s/it]

32%|###1 | 6/19 [07:06<14:58, 69.14s/it]

37%|###6 | 7/19 [08:04<13:06, 65.58s/it]

42%|####2 | 8/19 [09:12<12:11, 66.54s/it]

47%|####7 | 9/19 [10:14<10:51, 65.13s/it]

53%|#####2 | 10/19 [11:15<09:34, 63.80s/it]

58%|#####7 | 11/19 [12:29<08:54, 66.81s/it]

63%|######3 | 12/19 [13:35<07:47, 66.74s/it]

68%|######8 | 13/19 [14:55<07:04, 70.67s/it]

74%|#######3 | 14/19 [16:02<05:47, 69.52s/it]

79%|#######8 | 15/19 [17:03<04:27, 66.84s/it]

84%|########4 | 16/19 [18:07<03:18, 66.17s/it]

89%|########9 | 17/19 [19:13<02:12, 66.18s/it]

95%|#########4| 18/19 [20:20<01:06, 66.26s/it]

100%|##########| 19/19 [21:45<00:00, 71.83s/it]

100%|##########| 19/19 [21:45<00:00, 68.70s/it]

Graph.

fig, ax = plt.subplots(1, 2, figsize=(10, 4))

df[df.RT == 'ONNX'].set_index('N')[num].plot(ax=ax[0])

ax[0].set_title("Average ONNX prediction time per observation in a batch.")

df[df.RT == 'SKL'].set_index('N')[num].plot(ax=ax[1])

ax[1].set_title(

"Average scikit-learn prediction time\nper observation in a batch.")

Text(0.5, 1.0, 'Average scikit-learn prediction time\nper observation in a batch.')

Gain from parallelization#

There is a clear gap between after and before 10 observations when it is parallelized. Does this threshold depends on the number of trees in the model? For that we compute for each model the average prediction time up to 10 and from 10 to 20.

def parallized_gain(df):

df = df[df.RT == 'ONNX']

df10 = df[df.N <= 10]

t10 = sum(df10['average']) / df10.shape[0]

df10p = df[df.N > 10]

t10p = sum(df10p['average']) / df10p.shape[0]

return t10 / t10p

print('gain', parallized_gain(df))

gain 2.8027269425105525

Measures based on the number of trees#

We trained many models with different number of trees to see how the parallelization gain is moving. One models is trained for every distinct number of trees and then the prediction time is measured for different number of observations.

tries_set = [2, 5, 8] + list(range(10, 50, 5)) + list(range(50, 101, 10))

tries = [(nb, N) for N in range(2, 21, 2) for nb in tries_set]

training

models = {100: (hgb, oinf)}

for nb in tqdm(set(_[0] for _ in tries)):

if nb not in models:

hgb = HistGradientBoostingRegressor(max_iter=nb, max_depth=6)

hgb.fit(X_train, y_train)

onx = to_onnx(hgb, X_train[:1].astype(numpy.float32))

oinf = OnnxInference(onx, runtime='python_compiled')

models[nb] = (hgb, oinf)

0%| | 0/17 [00:00<?, ?it/s]

6%|5 | 1/17 [00:04<01:12, 4.52s/it]

12%|#1 | 2/17 [00:50<07:14, 29.00s/it]

24%|##3 | 4/17 [01:02<03:08, 14.53s/it]

29%|##9 | 5/17 [02:24<07:04, 35.39s/it]

35%|###5 | 6/17 [02:29<04:47, 26.16s/it]

41%|####1 | 7/17 [03:09<05:03, 30.36s/it]

47%|####7 | 8/17 [03:18<03:33, 23.75s/it]

53%|#####2 | 9/17 [04:09<04:17, 32.18s/it]

59%|#####8 | 10/17 [04:27<03:14, 27.83s/it]

65%|######4 | 11/17 [05:53<04:31, 45.19s/it]

71%|####### | 12/17 [07:07<04:30, 54.08s/it]

76%|#######6 | 13/17 [07:39<03:08, 47.19s/it]

82%|########2 | 14/17 [08:08<02:05, 41.80s/it]

88%|########8 | 15/17 [09:37<01:51, 55.99s/it]

94%|#########4| 16/17 [10:44<00:59, 59.24s/it]

100%|##########| 17/17 [11:14<00:00, 50.44s/it]

100%|##########| 17/17 [11:14<00:00, 39.65s/it]

prediction time

obs = []

for nb, N in tqdm(tries):

hgb, oinf = models[nb]

m = measure_time("oinf.run({'X': x})",

{'oinf': oinf, 'x': X32[:N]},

div_by_number=True,

number=50)

m['N'] = N

m['nb'] = nb

m['RT'] = 'ONNX'

obs.append(m)

df = DataFrame(obs)

num = ['min_exec', 'average', 'max_exec']

for c in num:

df[c] /= df['N']

df.head()

0%| | 0/170 [00:00<?, ?it/s]

5%|4 | 8/170 [00:00<00:02, 73.40it/s]

9%|9 | 16/170 [00:08<01:32, 1.67it/s]

12%|#1 | 20/170 [00:08<01:08, 2.20it/s]

16%|#5 | 27/170 [00:08<00:38, 3.72it/s]

18%|#8 | 31/170 [00:09<00:32, 4.26it/s]

20%|## | 34/170 [00:13<01:07, 2.03it/s]

24%|##4 | 41/170 [00:13<00:37, 3.41it/s]

27%|##7 | 46/170 [00:13<00:26, 4.71it/s]

29%|##9 | 50/170 [00:23<01:33, 1.28it/s]

31%|###1 | 53/170 [00:24<01:21, 1.43it/s]

35%|###4 | 59/170 [00:24<00:48, 2.27it/s]

37%|###7 | 63/170 [00:24<00:35, 3.03it/s]

39%|###9 | 67/170 [00:30<01:06, 1.55it/s]

41%|####1 | 70/170 [00:37<01:36, 1.03it/s]

45%|####4 | 76/170 [00:37<00:55, 1.68it/s]

47%|####7 | 80/170 [00:37<00:39, 2.27it/s]

49%|####8 | 83/170 [00:38<00:40, 2.15it/s]

50%|##### | 85/170 [00:45<01:21, 1.04it/s]

54%|#####3 | 91/170 [00:45<00:43, 1.80it/s]

56%|#####5 | 95/170 [00:45<00:30, 2.49it/s]

58%|#####7 | 98/170 [00:45<00:22, 3.16it/s]

59%|#####9 | 101/170 [00:52<00:52, 1.30it/s]

61%|###### | 103/170 [00:54<00:57, 1.16it/s]

64%|######4 | 109/170 [00:54<00:29, 2.08it/s]

66%|######5 | 112/170 [00:54<00:21, 2.69it/s]

68%|######7 | 115/170 [00:55<00:15, 3.49it/s]

69%|######9 | 118/170 [01:00<00:35, 1.47it/s]

71%|####### | 120/170 [01:03<00:44, 1.12it/s]

74%|#######4 | 126/170 [01:03<00:21, 2.07it/s]

76%|#######5 | 129/170 [01:03<00:15, 2.69it/s]

78%|#######7 | 132/170 [01:04<00:10, 3.49it/s]

79%|#######9 | 135/170 [01:10<00:27, 1.27it/s]

81%|######## | 137/170 [01:13<00:30, 1.07it/s]

84%|########3 | 142/170 [01:13<00:15, 1.83it/s]

85%|########5 | 145/170 [01:13<00:10, 2.44it/s]

87%|########7 | 148/170 [01:13<00:06, 3.23it/s]

89%|########8 | 151/170 [01:18<00:12, 1.52it/s]

90%|######### | 153/170 [01:25<00:22, 1.32s/it]

94%|#########3| 159/170 [01:26<00:07, 1.42it/s]

95%|#########5| 162/170 [01:26<00:04, 1.87it/s]

97%|#########7| 165/170 [01:26<00:02, 2.46it/s]

99%|#########8| 168/170 [01:33<00:01, 1.04it/s]

100%|##########| 170/170 [01:34<00:00, 1.12it/s]

100%|##########| 170/170 [01:34<00:00, 1.79it/s]

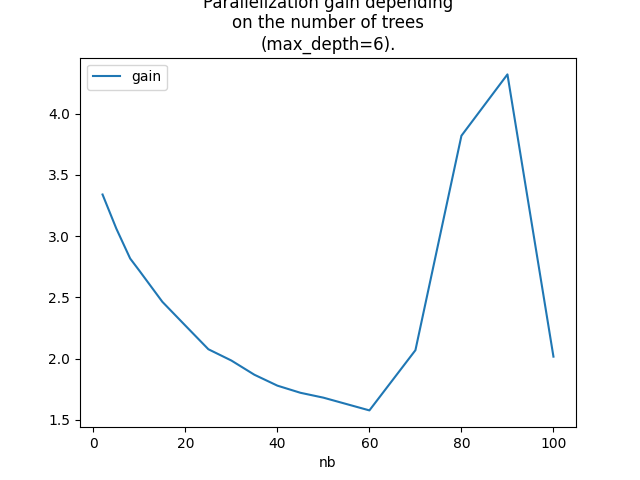

Let’s compute the gains.

gains = []

for nb in set(df['nb']):

gain = parallized_gain(df[df.nb == nb])

gains.append(dict(nb=nb, gain=gain))

dfg = DataFrame(gains)

dfg = dfg.sort_values('nb').reset_index(drop=True).copy()

dfg

Graph.

ax = dfg.set_index('nb').plot()

ax.set_title(

"Parallelization gain depending\non the number of trees\n(max_depth=6).")

Text(0.5, 1.0, 'Parallelization gain depending\non the number of trees\n(max_depth=6).')

That does not answer the question we are looking for

as we would like to know the best threshold th

which defines the number of observations for which

we should parallelized. This number depends on the number

of trees. A gain > 1 means the parallization should happen

Here, even two observations is ok.

Let’s check with lighter trees (max_depth=2),

maybe in that case, the conclusion is different.

models = {100: (hgb, oinf)}

for nb in tqdm(set(_[0] for _ in tries)):

if nb not in models:

hgb = HistGradientBoostingRegressor(max_iter=nb, max_depth=2)

hgb.fit(X_train, y_train)

onx = to_onnx(hgb, X_train[:1].astype(numpy.float32))

oinf = OnnxInference(onx, runtime='python_compiled')

models[nb] = (hgb, oinf)

obs = []

for nb, N in tqdm(tries):

hgb, oinf = models[nb]

m = measure_time("oinf.run({'X': x})",

{'oinf': oinf, 'x': X32[:N]},

div_by_number=True,

number=50)

m['N'] = N

m['nb'] = nb

m['RT'] = 'ONNX'

obs.append(m)

df = DataFrame(obs)

num = ['min_exec', 'average', 'max_exec']

for c in num:

df[c] /= df['N']

df.head()

0%| | 0/17 [00:00<?, ?it/s]

6%|5 | 1/17 [00:01<00:18, 1.18s/it]

12%|#1 | 2/17 [00:05<00:49, 3.27s/it]

24%|##3 | 4/17 [00:07<00:23, 1.83s/it]

29%|##9 | 5/17 [00:21<01:05, 5.45s/it]

35%|###5 | 6/17 [00:24<00:50, 4.62s/it]

41%|####1 | 7/17 [00:34<01:03, 6.37s/it]

47%|####7 | 8/17 [00:37<00:48, 5.43s/it]

53%|#####2 | 9/17 [00:48<00:55, 6.99s/it]

59%|#####8 | 10/17 [00:52<00:42, 6.03s/it]

65%|######4 | 11/17 [01:07<00:52, 8.68s/it]

71%|####### | 12/17 [01:16<00:43, 8.79s/it]

76%|#######6 | 13/17 [01:19<00:29, 7.28s/it]

82%|########2 | 14/17 [01:27<00:21, 7.25s/it]

88%|########8 | 15/17 [01:46<00:21, 10.93s/it]

94%|#########4| 16/17 [01:55<00:10, 10.39s/it]

100%|##########| 17/17 [02:02<00:00, 9.19s/it]

100%|##########| 17/17 [02:02<00:00, 7.18s/it]

0%| | 0/170 [00:00<?, ?it/s]

5%|4 | 8/170 [00:00<00:02, 77.72it/s]

9%|9 | 16/170 [00:04<00:47, 3.27it/s]

12%|#1 | 20/170 [00:05<00:47, 3.14it/s]

16%|#6 | 28/170 [00:05<00:25, 5.59it/s]

19%|#8 | 32/170 [00:11<01:06, 2.06it/s]

21%|## | 35/170 [00:17<01:48, 1.24it/s]

25%|##5 | 43/170 [00:17<00:57, 2.20it/s]

28%|##8 | 48/170 [00:23<01:22, 1.47it/s]

30%|### | 51/170 [00:30<01:57, 1.01it/s]

35%|###4 | 59/170 [00:30<01:03, 1.74it/s]

38%|###8 | 65/170 [00:31<00:47, 2.23it/s]

40%|#### | 68/170 [00:34<00:52, 1.96it/s]

44%|####4 | 75/170 [00:34<00:30, 3.10it/s]

48%|####7 | 81/170 [00:34<00:20, 4.42it/s]

50%|##### | 85/170 [00:37<00:31, 2.67it/s]

54%|#####4 | 92/170 [00:37<00:18, 4.11it/s]

57%|#####7 | 97/170 [00:37<00:13, 5.48it/s]

60%|###### | 102/170 [00:44<00:33, 2.02it/s]

64%|######4 | 109/170 [00:44<00:19, 3.09it/s]

67%|######7 | 114/170 [00:44<00:13, 4.12it/s]

70%|####### | 119/170 [00:51<00:29, 1.75it/s]

74%|#######4 | 126/170 [00:52<00:16, 2.67it/s]

77%|#######7 | 131/170 [00:52<00:10, 3.56it/s]

80%|######## | 136/170 [00:58<00:18, 1.87it/s]

84%|########4 | 143/170 [00:58<00:09, 2.86it/s]

87%|########7 | 148/170 [00:58<00:05, 3.80it/s]

89%|########9 | 152/170 [01:05<00:10, 1.66it/s]

91%|#########1| 155/170 [01:05<00:07, 1.91it/s]

95%|#########4| 161/170 [01:05<00:03, 2.94it/s]

97%|#########7| 165/170 [01:06<00:01, 3.85it/s]

99%|#########9| 169/170 [01:13<00:00, 1.46it/s]

100%|##########| 170/170 [01:14<00:00, 2.30it/s]

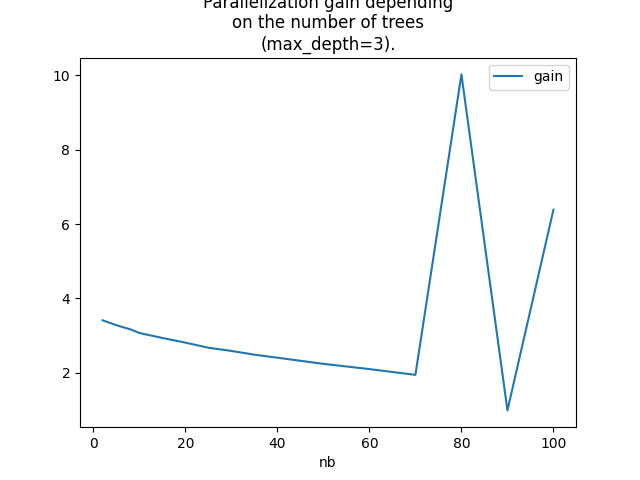

Measures.

gains = []

for nb in set(df['nb']):

gain = parallized_gain(df[df.nb == nb])

gains.append(dict(nb=nb, gain=gain))

dfg = DataFrame(gains)

dfg = dfg.sort_values('nb').reset_index(drop=True).copy()

dfg

Graph.

ax = dfg.set_index('nb').plot()

ax.set_title(

"Parallelization gain depending\non the number of trees\n(max_depth=3).")

Text(0.5, 1.0, 'Parallelization gain depending\non the number of trees\n(max_depth=3).')

The conclusion is somewhat the same but it shows that the bigger the number of trees is the bigger the gain is and under the number of cores of the processor.

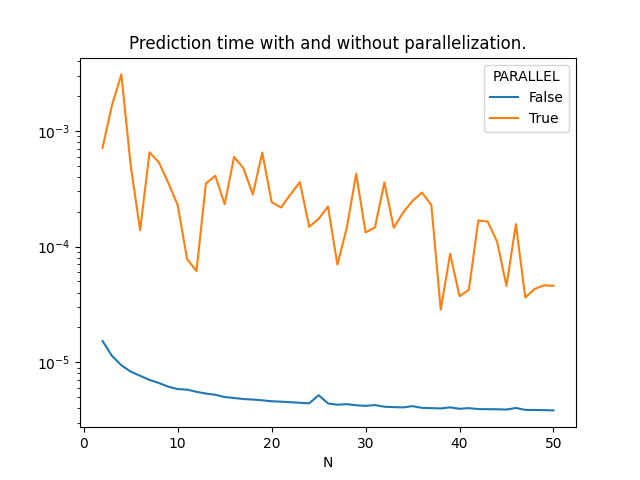

Moving the theshold#

The last experiment consists in comparing the prediction time with or without parallelization for different number of observation.

hgb = HistGradientBoostingRegressor(max_iter=40, max_depth=6)

hgb.fit(X_train, y_train)

onx = to_onnx(hgb, X_train[:1].astype(numpy.float32))

oinf = OnnxInference(onx, runtime='python_compiled')

obs = []

for N in tqdm(list(range(2, 51))):

oinf.sequence_[0].ops_.rt_.omp_N_ = 100

m = measure_time("oinf.run({'X': x})",

{'oinf': oinf, 'x': X32[:N]},

div_by_number=True,

number=20)

m['N'] = N

m['RT'] = 'ONNX'

m['PARALLEL'] = False

obs.append(m)

oinf.sequence_[0].ops_.rt_.omp_N_ = 1

m = measure_time("oinf.run({'X': x})",

{'oinf': oinf, 'x': X32[:N]},

div_by_number=True,

number=50)

m['N'] = N

m['RT'] = 'ONNX'

m['PARALLEL'] = True

obs.append(m)

df = DataFrame(obs)

num = ['min_exec', 'average', 'max_exec']

for c in num:

df[c] /= df['N']

df.head()

0%| | 0/49 [00:00<?, ?it/s]

2%|2 | 1/49 [00:00<00:34, 1.39it/s]

4%|4 | 2/49 [00:03<01:23, 1.77s/it]

6%|6 | 3/49 [00:09<02:53, 3.78s/it]

8%|8 | 4/49 [00:10<02:05, 2.78s/it]

10%|# | 5/49 [00:11<01:25, 1.93s/it]

12%|#2 | 6/49 [00:13<01:28, 2.06s/it]

14%|#4 | 7/49 [00:15<01:27, 2.09s/it]

16%|#6 | 8/49 [00:17<01:19, 1.94s/it]

18%|#8 | 9/49 [00:18<01:07, 1.69s/it]

20%|## | 10/49 [00:18<00:50, 1.31s/it]

22%|##2 | 11/49 [00:19<00:38, 1.02s/it]

24%|##4 | 12/49 [00:21<00:52, 1.41s/it]

27%|##6 | 13/49 [00:24<01:06, 1.86s/it]

29%|##8 | 14/49 [00:26<01:03, 1.83s/it]

31%|### | 15/49 [00:30<01:32, 2.72s/it]

33%|###2 | 16/49 [00:34<01:42, 3.12s/it]

35%|###4 | 17/49 [00:37<01:34, 2.95s/it]

37%|###6 | 18/49 [00:43<02:01, 3.92s/it]

39%|###8 | 19/49 [00:46<01:44, 3.48s/it]

41%|#### | 20/49 [00:48<01:30, 3.13s/it]

43%|####2 | 21/49 [00:51<01:27, 3.13s/it]

45%|####4 | 22/49 [00:55<01:32, 3.44s/it]

47%|####6 | 23/49 [00:57<01:16, 2.95s/it]

49%|####8 | 24/49 [00:59<01:08, 2.72s/it]

51%|#####1 | 25/49 [01:02<01:06, 2.78s/it]

53%|#####3 | 26/49 [01:03<00:51, 2.24s/it]

55%|#####5 | 27/49 [01:05<00:48, 2.19s/it]

57%|#####7 | 28/49 [01:11<01:11, 3.39s/it]

59%|#####9 | 29/49 [01:13<00:59, 2.97s/it]

61%|######1 | 30/49 [01:16<00:52, 2.77s/it]

63%|######3 | 31/49 [01:21<01:06, 3.67s/it]

65%|######5 | 32/49 [01:24<00:56, 3.30s/it]

67%|######7 | 33/49 [01:27<00:53, 3.32s/it]

69%|######9 | 34/49 [01:32<00:54, 3.64s/it]

71%|#######1 | 35/49 [01:37<00:57, 4.14s/it]

73%|#######3 | 36/49 [01:41<00:54, 4.18s/it]

76%|#######5 | 37/49 [01:42<00:37, 3.10s/it]

78%|#######7 | 38/49 [01:43<00:29, 2.68s/it]

80%|#######9 | 39/49 [01:44<00:21, 2.11s/it]

82%|########1 | 40/49 [01:45<00:15, 1.75s/it]

84%|########3 | 41/49 [01:49<00:18, 2.29s/it]

86%|########5 | 42/49 [01:52<00:18, 2.68s/it]

88%|########7 | 43/49 [01:55<00:15, 2.61s/it]

90%|########9 | 44/49 [01:56<00:10, 2.15s/it]

92%|#########1| 45/49 [01:59<00:10, 2.59s/it]

94%|#########3| 46/49 [02:00<00:06, 2.08s/it]

96%|#########5| 47/49 [02:01<00:03, 1.78s/it]

98%|#########7| 48/49 [02:03<00:01, 1.60s/it]

100%|##########| 49/49 [02:04<00:00, 1.47s/it]

100%|##########| 49/49 [02:04<00:00, 2.54s/it]

Graph.

piv = df[['N', 'PARALLEL', 'average']].pivot('N', 'PARALLEL', 'average')

ax = piv.plot(logy=True)

ax.set_title("Prediction time with and without parallelization.")

somewhere/workspace/mlprodict/mlprodict_UT_39_std/_doc/examples/plot_parallelism.py:338: FutureWarning: In a future version of pandas all arguments of DataFrame.pivot will be keyword-only.

piv = df[['N', 'PARALLEL', 'average']].pivot('N', 'PARALLEL', 'average')

Text(0.5, 1.0, 'Prediction time with and without parallelization.')

Parallelization is working.

plt.show()

Total running time of the script: ( 44 minutes 13.317 seconds)